©2021 Reporters Post24. All Rights Reserved.

We have something very interesting for our readers today. We recently got our hands on some internal documents that confirm Intel is preparing to launch a next-generation AI training accelerator with monstrous capabilities later this year. Dubbed the Habana Gaudi 2 platform, it will take aim at NVIDIA’s new datacenter products (launching later this week) for deep learning and aims to provide a higher value (from a TCO perspective) alternative. Keep in mind the documents were dated earlier this year, and the launch window *could* have moved during this time so take that with a grain of salt.

Intel gets ready to ‘play offense’ with Habana Gaudi 2 deep learning processor, launching in May 2022

Intel’s Habana offerings are split into two categories: AI training and AI inference. Gaudi is the AI training platform and Goya is the AI inference platform. The Gaudi 2 chip will succeed the older Gaudi chips offered by Intel. While the documentation we acquired only talked about a Gaudi 2 chip, it is possible that there is a Goya 2 chip in the works as well, since most vendors offer AI training and inference as a combo. Customers can already use Intel’s Gaudi platform through the Amazon EC2 DL1 instance on AWS, where AWS says it offers up to 40% better performance per dollar than NVIDIA-based instances.

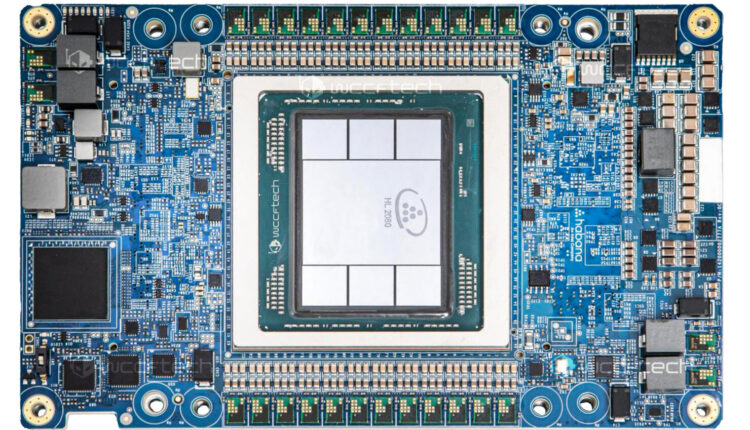

We also have the first-ever picture of the Intel HL 2080 AI chip based on the Gaudi 2 platform:

While we did not receive any additional information on the Gaudi 2 HL 2080 chip, we can see that it has 6 HBM stacks. If these are of the HBM2 type, at 8 GB per stack this is a total of 48 GB of memory onboard. If it is the HBM3 type, then the sky is the limit and it could be 96 GB at 16 GB per stack (or more). We can also see the soldering pads for a total of 24 VRMs for what appears to be a 12+12 phase design. That is 50% more power phases than the older Habana chip, which had an 8+8 design for a total of 16 VRMs, and suggests a correspondingly higher power budget. Assuming these are standard-sized HBM stacks, it also looks like Intel has increased the die size by roughly 50% over the last generation of Gaudi processors. I am not going to do a die-size estimation here and will leave that to my colleagues, who will inevitably do one.
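For readers who want to check the math, here is a minimal sketch of the arithmetic behind those figures. The stack count and VRM counts come from the picture; the per-stack capacities (8 GB for HBM2, 16 GB for HBM3) are our assumptions and not numbers from the leaked documents, and the function names are purely illustrative.

```python
# Back-of-the-envelope check of the memory and VRM figures.
# Stack and VRM counts are read off the picture; per-stack capacities
# are ASSUMPTIONS, not values confirmed by the leaked documents.

HBM_STACKS = 6
ASSUMED_GB_PER_STACK = {"HBM2": 8, "HBM3": 16}

OLD_VRMS = 16  # 8+8 phase design on the older Gaudi chip
NEW_VRMS = 24  # 12+12 phase design seen on the HL 2080


def total_memory_gb(stacks: int, gb_per_stack: int) -> int:
    """Total onboard memory if every stack has the same capacity."""
    return stacks * gb_per_stack


def percent_increase(old: float, new: float) -> float:
    """Relative increase from old to new, in percent."""
    return (new - old) / old * 100


capacities = {
    hbm_type: total_memory_gb(HBM_STACKS, gb)
    for hbm_type, gb in ASSUMED_GB_PER_STACK.items()
}
vrm_increase = percent_increase(OLD_VRMS, NEW_VRMS)

for hbm_type, total in capacities.items():
    print(f"{hbm_type}: {total} GB total")
print(f"VRM count increase: {vrm_increase:.0f}%")
```

Note that the VRM figure only counts phases. Actual board power depends on how much current each phase is rated for, which the picture does not tell us.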

To get a good idea of the rough transistor count increase, however, we also need to know the process the chip is built on. We know that the Intel Gaudi 2 HL 2080 chip is built on ‘a’ 7nm process based on this interview with Eitan Medina, COO of Habana Labs. Unfortunately, this doesn’t really help us much because 7nm could refer to TSMC’s N7 process, Intel 7 (formerly Intel 10nm), or Intel 4 (formerly Intel 7nm and the least likely). The original Habana Gaudi processors were built on TSMC’s 16nm process, which makes it more likely for this chip to be on N7 or Intel 7. Whatever the case, the Gaudi 2 platform is clearly on a far smaller node than 16nm (which in itself gives a density increase of roughly 50%). Combined with the die size increase, we are looking at an absolute beast of a processor which should easily go toe to toe with NVIDIA’s upcoming Hopper datacenter GPU – in terms of performance per dollar – and maybe even in terms of absolute performance.

Intel’s CEO Pat Gelsinger has previously hinted at a “very aggressive path” for its Habana AI arm:

‘We are playing offense, not defense’

We also have, with our Habana product line [a specialized A.I. chipmaker Intel bought in 2019], unquestionably laid out a very aggressive path and our cloud partnership with Amazon is a great demonstration of that. So clearly, I’d say the idea of CPUs is Intel’s provenance. We’re now building A.I. into that and we expect this to be an area where we are on the offense, not the defense going forward.

Since being acquired by Intel, Habana Labs has had far more resources to play with, and it looks like the company is getting serious about DL/ML applications. Intel finally has a very real product roadmap that could fend off NVIDIA and even take market share from it.